Analytical Framework for Intelligence (AFI)

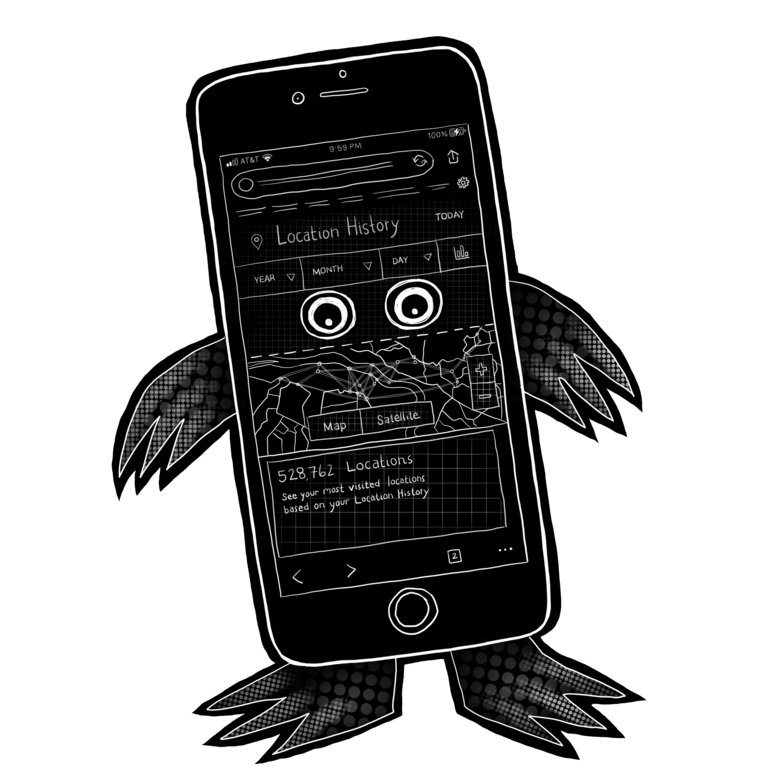

Analytical Framework for Intelligence (AFI) is a Palantir tool that uses profiling algorithms created for former President Trump’s “Extreme Vetting” initiative [Footnote 1] to “detect trends, patterns, and emerging threats, and identify non-obvious relationships between persons, events, and cargo.” [Footnote 2] According to a 2012 Privacy Impact Assessment (PIA) on AFI, “data is indexed so that the system may retrieve it by any identifier maintained in the record. As information is retrieved from multiple sources it may be joined to create a more complete representation of an event or concept. For example, a complex event such as a seizure that is represented by multiple records may be composed into a single object, for display, representing that event.” [Footnote 3] In this way, AFI both builds an index that joins records from many sources and offers visualizations of the combined data.

Among other data points, AFI uses and stores public records showing who owns land near the US-Mexico border. It also ingests records on “individuals not implicated in activities in violation of laws enforced or administered by CBP but with pertinent knowledge of some circumstance of a case or record subject,” as well as on people who are not accused of breaking immigration or other laws but who might have “knowledge of narcotics trafficking or related activities.” [Footnote 4] In 2019, AFI made headlines when NBC reported that it was being used to mine US citizens’ social media posts about “caravans” and political rallies in order to train its targeting algorithm. [Footnote 5]

AFI provides geospatial, temporal, and statistical analysis tools, along with advanced search of commercial databases and Internet sources, including social media and traditional news media. Like other DHS systems, AFI automatically collects and stores criminalizing data drawn from travel records in ATS-UPAX, ESTA, ADIS, and ICM/TECS, from records of immigration arrests and deportations, and from lists of foreign students. AFI also copies large volumes of this government data onto its own (Palantir) servers. [Footnote 6] AFI uses Apache Hadoop, an open-source framework for distributed storage and processing that allows faster searching across large datasets; AFI’s Hadoop deployment is hosted on the Amazon cloud. Hadoop also continuously replicates stored data across multiple locations. [Footnote 7]